🔑 Key Takeaways

• The Event: Memory leadership is moving from capacity scale to architectural differentiation.

• The Cause: AI workloads expose the physical limits of traditional DRAM and of HBM stacking.

• The Implication: Future competitiveness will depend on memory structure, not just wafer volume.

🚀 Opening

For four decades, memory has been a scale business: wafer starts, yield learning, and capital intensity determined the winners. Today, AI computing is reshaping that equation. As bandwidth, latency, and thermal constraints collide, memory innovation is no longer only about how much is built, but also about how it is architected.

📉 What’s Changing

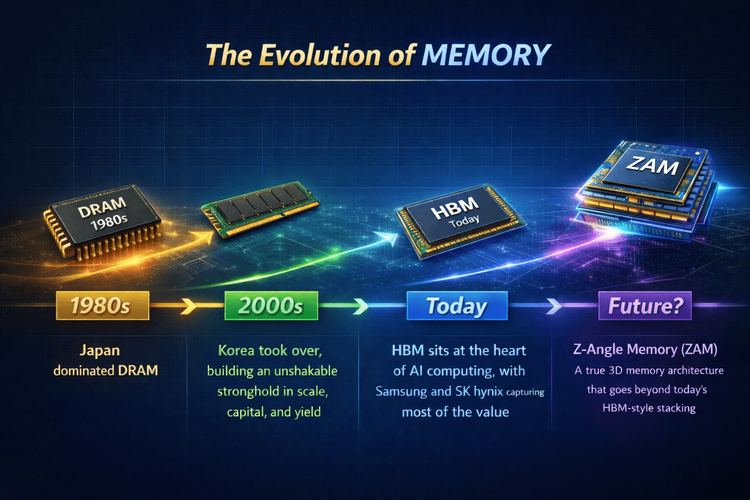

The memory industry has evolved through distinct phases rather than along a linear path.

📌 1980s: Japan led DRAM through process discipline and quality control.

📌 2000s: Korea consolidated its dominance, as Samsung and SK hynix built unmatched advantages in scale, capex efficiency, and yield learning.

📌 Today: High Bandwidth Memory (HBM) sits at the core of AI accelerators and captures disproportionate value as GPUs and custom ASICs grow increasingly bandwidth-hungry.

Yet HBM itself is approaching physical stress points: taller stacks, longer vertical interconnects, and increasing thermal density.

📊 Data / Structural Comparison

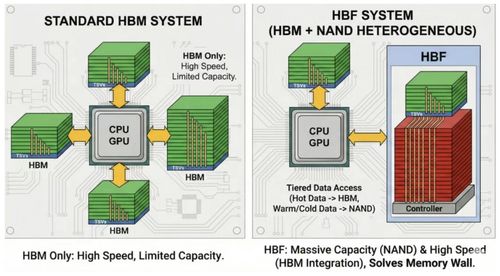

Traditional DRAM scaling focused on reducing cost per bit through node shrinks.

HBM improved performance through vertical stacking and a wide I/O interface, but it still relies on layered dies connected by through-silicon vias (TSVs).

Emerging 3D memory concepts aim to shorten data paths both laterally and vertically, reducing signal delay while making heat easier to remove. That is an architectural advantage, not a volumetric one.

🧠 Why Old Assumptions No Longer Work

The assumption that more layers automatically equal better performance is breaking down.

AI training and inference workloads amplify memory bottlenecks faster than transistor scaling can compensate for them. Power density, thermal throttling, and latency variability are now system-level constraints. Under these conditions, simply adding stacks or capacity delivers diminishing returns.
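A common way to see why memory, rather than compute, often caps AI throughput is the roofline model: sustained throughput is bounded by the lower of peak compute and memory bandwidth multiplied by arithmetic intensity (FLOPs performed per byte moved). The minimal Python sketch below assumes a hypothetical accelerator with 1,000 TFLOP/s of peak compute and 3 TB/s of memory bandwidth; the figures, the three kernel intensities, and the function names are illustrative only and do not describe any specific product.

```python
def attainable_tflops(peak_tflops: float, bandwidth_tb_s: float,
                      flops_per_byte: float) -> float:
    """Roofline bound on sustained throughput, in TFLOP/s.

    A kernel can go no faster than the compute roof (peak_tflops) or the
    memory roof (bandwidth x arithmetic intensity), whichever is lower.
    Units: TB/s x FLOP/byte = TFLOP/s.
    """
    return min(peak_tflops, bandwidth_tb_s * flops_per_byte)


def ridge_point(peak_tflops: float, bandwidth_tb_s: float) -> float:
    """Arithmetic intensity (FLOP/byte) below which a kernel is memory-bound."""
    return peak_tflops / bandwidth_tb_s


# Hypothetical accelerator figures, for illustration only.
PEAK_TFLOPS = 1000.0
BANDWIDTH_TB_S = 3.0

# Hypothetical kernels, expressed as FLOPs per byte moved from memory.
for intensity in (2.0, 50.0, 500.0):
    bound = attainable_tflops(PEAK_TFLOPS, BANDWIDTH_TB_S, intensity)
    ridge = ridge_point(PEAK_TFLOPS, BANDWIDTH_TB_S)
    limit = "memory" if intensity < ridge else "compute"
    print(f"{intensity:>6.1f} FLOP/B -> {bound:7.1f} TFLOP/s ({limit}-bound)")
```

Note that capacity does not appear anywhere in the bound: for a memory-bound kernel, only more bandwidth or better data reuse raises the ceiling.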

🔍 A Different Bet: Architecture Over Volume

Against this backdrop, SoftBank-backed SAIMEMORY, reportedly working with Intel, is exploring Z-Angle Memory (ZAM).

ZAM proposes a true 3D memory architecture, not just stacked dies, rethinking how memory cells and interconnects are spatially organized.

The theoretical benefits are straightforward:

• Shorter data paths translate into lower latency.

• Improved thermal behavior supports sustained performance.

• Higher structural scalability reduces reliance on extreme stacking.
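The link between shorter paths and lower latency has a well-known first-order basis in interconnect physics: for an unbuffered wire with uniform resistance r and capacitance c per unit length, the Elmore delay is roughly 0.5·r·c·L², so delay grows with the square of length L. The Python sketch below uses hypothetical per-unit-length parasitics and ignores driver, load, and TSV effects. It illustrates the scaling trend only and is not a model of HBM or ZAM.

```python
def elmore_wire_delay_s(r_ohm_per_m: float, c_f_per_m: float,
                        length_m: float) -> float:
    """First-order Elmore delay of an unbuffered distributed RC wire, in seconds.

    Assumes uniform resistance and capacitance per unit length and ignores
    driver resistance and load capacitance: t ~= 0.5 * r * c * L**2.
    """
    return 0.5 * r_ohm_per_m * c_f_per_m * length_m ** 2


# Hypothetical per-unit-length parasitics, for illustration only.
R_PER_M = 1e5    # ohm per metre (100 ohm/mm)
C_PER_M = 2e-10  # farad per metre (0.2 pF/mm)

for length_mm in (4.0, 2.0, 1.0):
    delay_ps = elmore_wire_delay_s(R_PER_M, C_PER_M, length_mm * 1e-3) * 1e12
    print(f"{length_mm:.1f} mm wire -> {delay_ps:.1f} ps")
```

Under this model, halving the path length cuts the wire-delay term to a quarter. Real designs insert repeaters on long wires, which makes delay closer to linear in length, so the quadratic trend is an intuition for why geometry matters rather than a prediction.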

🏭 Implications for OEM, EMS, and Procurement Teams

HBM will not be displaced in the near term. Its ecosystem, yields, and qualification cycles are deeply entrenched.

However, architectural diversification introduces new variables into long-term roadmaps:

• BOM planning must account for packaging and thermal trade-offs, not only capacity.

• Supplier risk assessments should include architectural dependency, not just fab scale.

• Design teams will need tighter co-optimization between memory, logic, and packaging.

🚧 How Smart Teams Are Responding

Leading OEM and EMS teams are tracking non-mainstream memory architectures early, even before commercialization.

They are stress-testing AI workloads against latency and thermal ceilings, not peak bandwidth alone.

Most importantly, they are treating memory architecture as a strategic design choice, not a commodity input.

🔚 Closing

HBM defines the present of AI computing. Architecture will define its future.

In the next phase of memory evolution, how memory is built may matter as much as how much is built.

Forward-looking teams are already planning for that shift.