Key Takeaways

• The Event: Google introduces TPU v8 (8t for training, 8i for inference), splitting architectures by workload stage.
• The Cause: AI models are shifting toward MoE, long-context reasoning, and agent-based systems with new bottlenecks.
• The Implication: Infrastructure strategies must move from general-purpose scaling to workload-specific optimization.

🚀 Opening

AI infrastructure is no longer constrained by raw compute alone. As models evolve toward mixture-of-experts and agent-based reasoning, bottlenecks are shifting to memory bandwidth, interconnect latency, and data orchestration. Google’s TPU v8 architecture signals a structural shift: separating training and inference hardware to maximize efficiency across the AI lifecycle. This article examines what changed and why it matters for OEM and EMS system design.

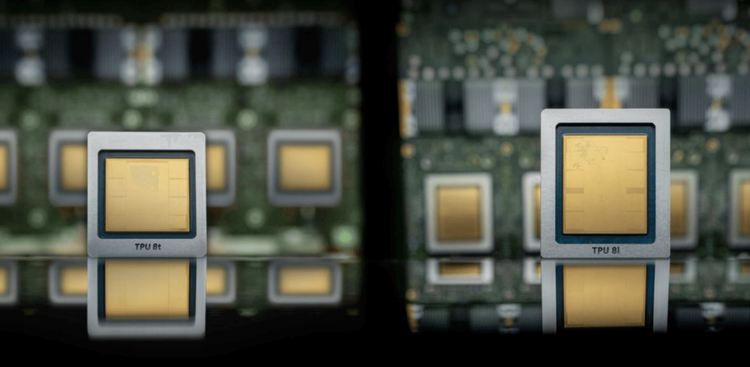

📊 What’s Changing

The TPU v8 generation introduces two distinct systems: TPU 8t for large-scale pre-training and TPU 8i for inference and sampling workloads. This marks a departure from unified accelerator strategies.

TPU 8t focuses on throughput scaling. It integrates up to 9,600 chips per supernode and introduces SparseCore to offload irregular memory operations such as embedding lookups. Native FP4 support reduces memory bandwidth pressure while doubling matrix throughput.
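To make the FP4 bandwidth argument concrete, the sketch below estimates how many bytes of weights must be stored or streamed at different precisions. The 100-billion-parameter model size is a hypothetical figure chosen for illustration, and the calculation ignores per-block scaling factors and other metadata that real low-precision formats typically carry.

```python
# Bits per value for each number format (standard widths).
BITS_PER_VALUE = {"bf16": 16, "fp8": 8, "fp4": 4}


def weight_bytes(num_params: int, fmt: str) -> float:
    """Bytes needed to store (or stream once) num_params weights in fmt."""
    return num_params * BITS_PER_VALUE[fmt] / 8


# Hypothetical model size, not a figure from the TPU v8 announcement.
params = 100_000_000_000

for fmt in BITS_PER_VALUE:
    print(f"{fmt}: {weight_bytes(params, fmt) / 1e9:.0f} GB")
```

Under these assumptions, FP4 weights occupy a quarter of the BF16 footprint and half of the FP8 footprint, which is where the reduced bandwidth pressure comes from.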

TPU 8i, by contrast, is optimized for latency-sensitive inference. It introduces a Collective Acceleration Engine (CAE) to reduce synchronization overhead and a new Boardfly topology to minimize communication hops across chips.

At the system level, Google also redesigned networking with Virgo (for scale-out training) and Boardfly (for low-latency inference), reflecting diverging workload characteristics.

📈 Data/Comparison

Compared with the previous generation (Ironwood TPU):

Training efficiency: TPU 8t delivers approximately 2.7× improvement in cost-performance.

Inference efficiency: TPU 8i achieves around 80% improvement, particularly for MoE models.

Energy efficiency: Both architectures deliver up to 2× performance per watt.

Network scaling: Inter-chip bandwidth increases 2×, while data center network bandwidth scales up to 4×.

Latency reduction: Boardfly reduces network diameter from 16 hops (3D torus) to 7 hops, a ~56% cut in worst-case hop count that lowers tail latency.
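The ~56% figure follows directly from the hop counts. The sketch below reproduces that arithmetic and then applies a toy latency model to show how the hop-count reduction translates into latency. The per-hop and fixed endpoint costs are hypothetical values, not measurements of either topology.

```python
def relative_reduction(before: float, after: float) -> float:
    """Fractional reduction going from `before` to `after`."""
    return (before - after) / before


def worst_case_latency_us(hops: int, per_hop_us: float, fixed_us: float = 0.0) -> float:
    """Toy model: latency = fixed endpoint cost + hops * per-hop cost."""
    return fixed_us + hops * per_hop_us


TORUS_HOPS = 16     # network diameter quoted for the 3D torus
BOARDFLY_HOPS = 7   # network diameter quoted for Boardfly

hop_cut = relative_reduction(TORUS_HOPS, BOARDFLY_HOPS)
print(f"hop-count reduction: {hop_cut:.2%}")  # 56.25%

# Hypothetical costs: 0.5 us per hop plus 2 us of fixed endpoint overhead.
lat_torus = worst_case_latency_us(TORUS_HOPS, per_hop_us=0.5, fixed_us=2.0)
lat_boardfly = worst_case_latency_us(BOARDFLY_HOPS, per_hop_us=0.5, fixed_us=2.0)
lat_cut = relative_reduction(lat_torus, lat_boardfly)
print(f"latency reduction with fixed overhead: {lat_cut:.2%}")  # 45.00%
```

In this model the latency saving equals the hop-count saving only when per-hop cost dominates. Any fixed endpoint overhead dilutes it, so the 56% is best read as an upper bound on the hop-related component.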

⚠️ Why Old Assumptions No Longer Work

Traditional accelerator design assumed uniform workloads dominated by dense matrix operations. That assumption breaks under modern AI conditions.

First, MoE architectures introduce sparse, dynamic routing patterns that stress memory systems rather than compute units. Second, long-context inference shifts the bottleneck to KV cache access and synchronization latency. Third, agent-based systems require iterative reasoning loops, amplifying communication overhead.
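The long-context point can be illustrated with the standard KV cache sizing formula for transformer decoders: two tensors (keys and values) per layer, each scaling with sequence length. The model dimensions below are hypothetical and do not describe any specific model served on TPU 8i.

```python
def kv_cache_bytes(layers: int, kv_heads: int, head_dim: int,
                   seq_len: int, batch: int, bytes_per_value: int) -> int:
    """Total KV cache size in bytes for a decoder-only transformer."""
    # Factor 2: one key tensor and one value tensor per layer.
    return 2 * layers * kv_heads * head_dim * seq_len * batch * bytes_per_value


# Hypothetical configuration: 64 layers, 8 KV heads of dimension 128, 16-bit values.
config = dict(layers=64, kv_heads=8, head_dim=128, batch=1, bytes_per_value=2)

for seq_len in (8_192, 131_072):
    size_gib = kv_cache_bytes(seq_len=seq_len, **config) / 2**30
    print(f"{seq_len:>7} tokens: {size_gib:.0f} GiB per sequence")
```

With these assumed dimensions, a single 131,072-token sequence holds 32 GiB of cache that must be read at every decoding step, which is why memory access rather than arithmetic tends to set the pace.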

A single architecture optimized for peak FLOPS can no longer deliver optimal system-level efficiency. Instead, performance depends on aligning hardware topology, memory hierarchy, and interconnect design with workload characteristics.

🏭 Implications for OEM / EMS / Procurement

For hardware architects and sourcing teams, TPU v8 reflects a broader industry direction rather than a Google-specific anomaly.

System design: Expect increasing divergence between training clusters and inference clusters, requiring different interconnects, memory configurations, and thermal profiles.

BOM complexity: Specialized accelerators (e.g., SparseCore, CAE) imply tighter integration between compute dies and system-level architecture, reducing interchangeability of components.

Supply chain planning: Networking components (optical switches, high-radix fabrics) and advanced packaging will become critical cost drivers, not just GPUs/ASICs.

Power and cooling: Higher efficiency per watt does not reduce total power demand; instead, it enables denser deployments, increasing rack-level thermal challenges.
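The power and cooling point comes down to a simple ratio: power drawn equals delivered performance divided by performance per watt. The sketch below uses the article's "up to 2×" efficiency gain together with a hypothetical 3× growth in per-rack performance to show how power can still rise.

```python
def power_growth(perf_growth: float, perf_per_watt_growth: float) -> float:
    """Relative change in power draw, since power = performance / (performance per watt)."""
    return perf_growth / perf_per_watt_growth


EFFICIENCY_GAIN = 2.0    # "up to 2x performance per watt" from the comparison
RACK_PERF_GROWTH = 3.0   # hypothetical: denser racks delivering 3x the performance

growth = power_growth(RACK_PERF_GROWTH, EFFICIENCY_GAIN)
print(f"rack power changes by {growth:.1f}x")  # 1.5x
```

Rack power stays flat only if deployed performance grows no faster than efficiency. Any densification beyond that shows up as additional thermal load.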

🔧 How Smart Teams Are Responding

Leading OEM and EMS teams are already adapting in several ways.

They are separating infrastructure roadmaps into training vs inference platforms, rather than pursuing unified clusters.

They are investing in interconnect-aware system design, prioritizing topology (e.g., Dragonfly-like, flattened networks) as a first-order decision rather than an afterthought.

They are collaborating earlier with silicon providers to align board design, memory layout, and software stack compatibility.

They are also reevaluating procurement strategies, shifting focus from chip cost to system-level TCO, including networking, storage bandwidth, and orchestration overhead.
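As a rough illustration of the shift from chip cost to system-level TCO, the sketch below compares two hypothetical cluster options on cost per unit of work. Every name and number here is invented for the example. The cost categories simply mirror the ones listed above, and a real model would need far more detail.

```python
from dataclasses import dataclass


@dataclass
class ClusterCost:
    """Hypothetical cost breakdown, in arbitrary currency units."""
    accelerators: float     # up-front chip cost
    networking: float       # up-front fabric, optics, switches
    storage: float          # up-front storage bandwidth and capacity
    power_per_year: float   # recurring energy and cooling
    ops_per_year: float     # recurring orchestration and operations

    def tco(self, years: int) -> float:
        """Capex plus recurring costs over the planning horizon."""
        capex = self.accelerators + self.networking + self.storage
        opex = (self.power_per_year + self.ops_per_year) * years
        return capex + opex


def cost_per_unit_work(cost: ClusterCost, years: int, work_per_year: float) -> float:
    """TCO divided by total useful work delivered over the horizon."""
    return cost.tco(years) / (work_per_year * years)


# Option A: cheaper chips and fabric, lower sustained throughput.
option_a = ClusterCost(10.0, 2.0, 1.0, 1.5, 0.5)
# Option B: pricier chips and a better interconnect, higher sustained throughput.
option_b = ClusterCost(12.0, 4.0, 1.0, 1.5, 0.5)

print(f"A: {cost_per_unit_work(option_a, 4, 100.0):.4f} per unit of work")
print(f"B: {cost_per_unit_work(option_b, 4, 140.0):.4f} per unit of work")
```

In this invented scenario, option B costs more up front but comes out cheaper per unit of work. That is the kind of outcome a chip-price comparison alone would miss.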

🔒 Closing

TPU v8 is not just a generational upgrade; it reflects a structural shift toward workload-specific AI infrastructure. As model architectures continue to evolve, the winning systems will not be those with the highest peak compute, but those with the most balanced end-to-end efficiency. For teams building next-generation platforms, the question is no longer “how to scale,” but “what to specialize.”